To the best of our knowledge, this work is the first to use a Moreau envelope-based reformulation of BLO to design a single-loop, Hessian-free, gradient-based algorithm with non-asymptotic convergence analysis for general BLO problems with potentially nonconvex and nonsmooth LL objective functions:

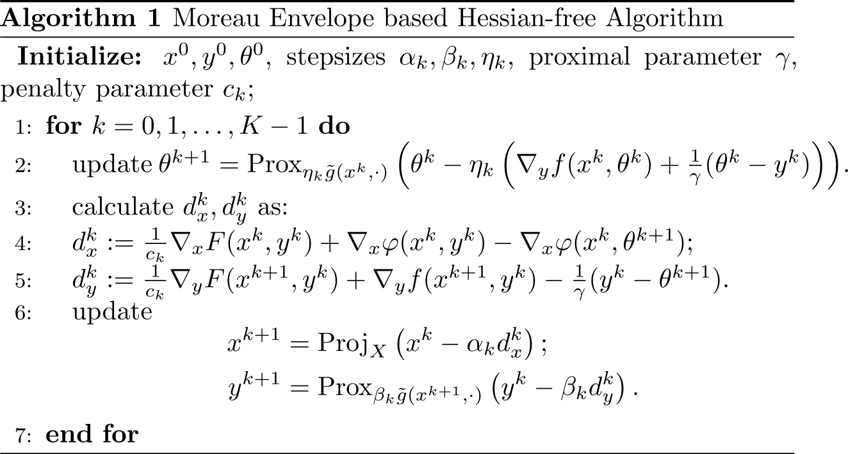

- We propose the Moreau Envelope-based Hessian-free Algorithm (MEHA) for general BLO problems with nonconvex and potentially nonsmooth LL objective functions. MEHA avoids second-order derivative approximations related to the Hessian matrix and can be implemented in a single-loop manner, which makes it practical and efficient for large-scale BLO problems.

- We provide a rigorous non-asymptotic convergence analysis of MEHA under milder conditions, requiring neither the convexity assumption nor the PL condition on the LL problem. In the smooth BLO setting, our assumption reduces to the UL and LL objective functions being L-smooth.

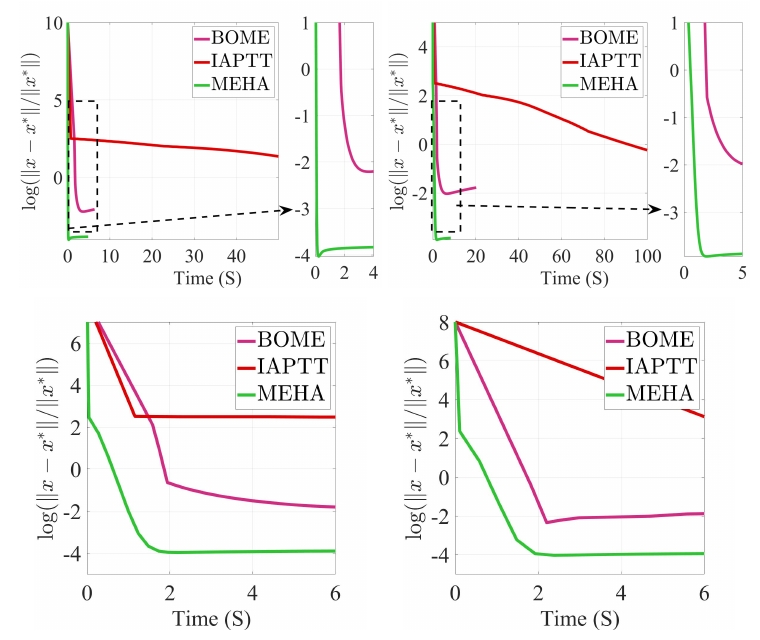

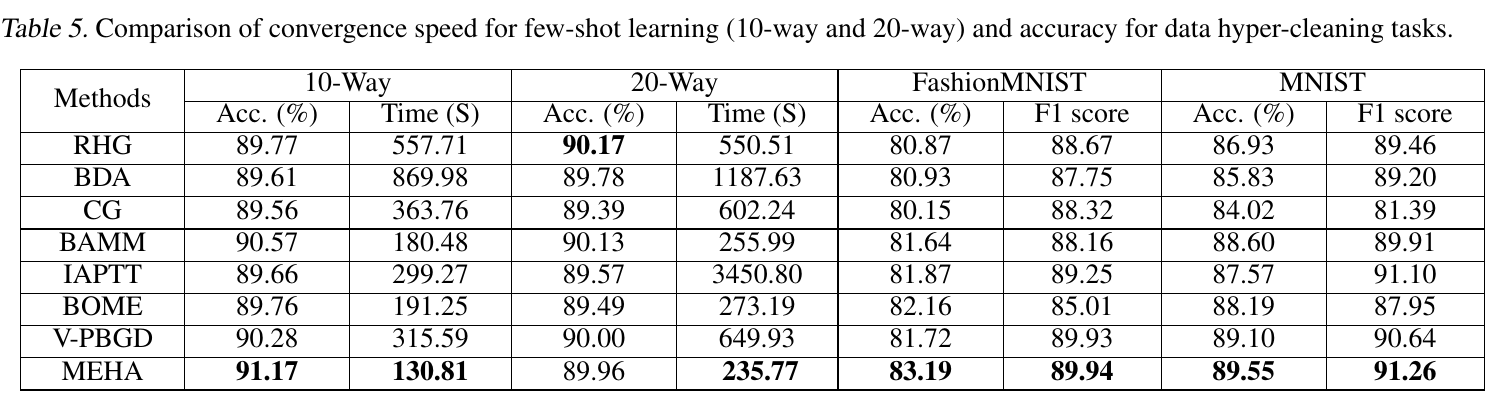

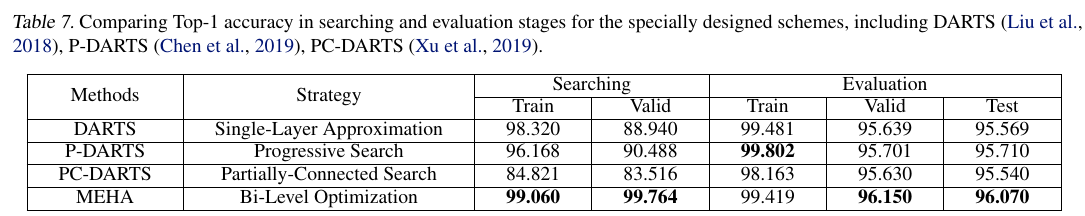

- We validate the effectiveness and efficiency of MEHA on various synthetic problems, two typical hyper-parameter learning tasks, and a real-world neural architecture search application. These experiments collectively substantiate its superior performance.
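
For orientation, the Moreau envelope-based reformulation referred to above can be sketched as follows. The notation is assumed for illustration and is not fixed in this section: $F$ denotes the UL objective, $\varphi$ the (possibly nonsmooth, nonconvex) LL objective, $x$ and $y$ the UL and LL variables, and $\gamma > 0$ a proximal parameter.

$$
v_\gamma(x, y) := \inf_{\theta} \Big\{ \varphi(x, \theta) + \frac{1}{2\gamma} \|\theta - y\|^2 \Big\},
\qquad
\min_{x, y} \; F(x, y) \quad \text{s.t.} \quad \varphi(x, y) - v_\gamma(x, y) \le 0 .
$$

Since $v_\gamma(x, y) \le \varphi(x, y)$ always holds (take $\theta = y$), the constraint forces equality. Where the proximal subproblem has a unique minimizer $\theta^*_\gamma(x, y)$ and the envelope is differentiable (an assumption here, for example when $\gamma$ is small enough relative to a weak-convexity modulus of $\varphi(x, \cdot)$), the standard identity

$$
\nabla_y v_\gamma(x, y) = \frac{y - \theta^*_\gamma(x, y)}{\gamma}
$$

shows that the envelope's gradient involves only the proximal point and no Hessian of $\varphi$, which is what makes a Hessian-free scheme plausible.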